Automation

Because Tintri VMstore systems let you work at the VM level, automating tasks such as replication policies is much simpler. Storage policies that you automate for a VM are executed natively on the storage. The operational overhead of these tasks is minimal, as is the effort required to automate them.

To summarize Tintri benefits:

- Tintri makes storage simple, so you can spend more time automating and less time worrying about storage details such as

- Tintri gives you a VM-focused view, as well as a forward-thinking vision to integrate with public cloud services where

- Tintri facilitates automation via plugins and REST APIs

With the Tintri REST API, any automation tool can invoke Tintri-specific functions.

The Tintri snapshot capability is extremely useful for protecting VMs. An app developer can take a snapshot of a VM before an application push, or a Windows server engineer could snapshot hundreds of servers before they get patched.

Tintri snapshots operate on the VM itself, make very efficient use of data, and do not impose any performance overhead. Using the vRealize Orchestrator Snapshot Workflow, you can offer this capability as part of your service catalog or as part of a larger workflow.

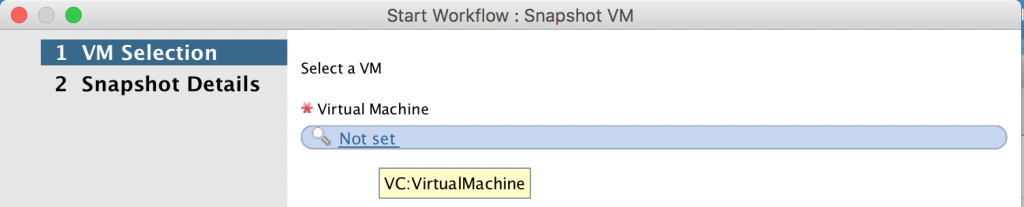

The vRealize Orchestrator Snapshot Workflow is ready to use out of the box and requires a minimal amount of input to execute. In the first step, the user selects the VM from vCenter, as shown in Figure 77.

Figure 77. How to use the vRealize Orchestrator workflow.

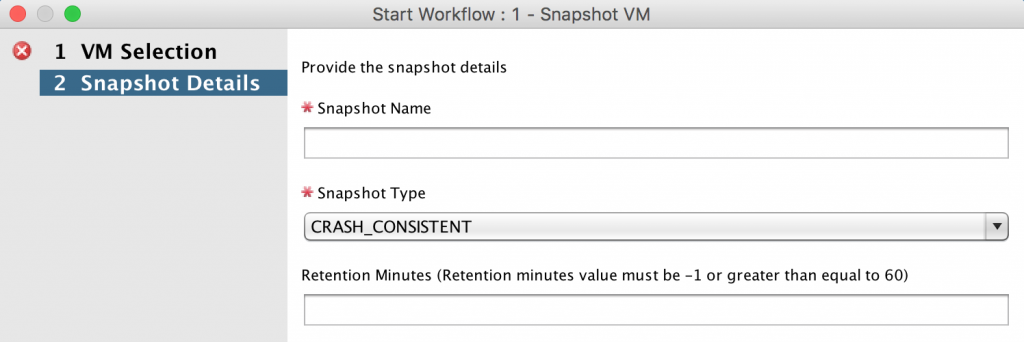

In the second step, the user enters some additional information about the snapshot:

- Snapshot name: name of the snapshot as it will appear on the Tintri VMstore

- Snapshot type:

  - Crash-consistent

  - VM-consistent

- Retention Minutes: number of minutes to keep the snapshot
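The three inputs listed above can be checked before they are handed to a workflow. The following is a minimal sketch of that check, not part of the Tintri plugin or the Snapshot Workflow itself. The `SnapshotRequest` class, the `validate_request` function, and the lowercase type labels are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical labels mirroring the two snapshot types listed above.
SNAPSHOT_TYPES = ("crash-consistent", "vm-consistent")


@dataclass(frozen=True)
class SnapshotRequest:
    """Inputs a user supplies across the two workflow steps (illustrative only)."""
    vm_name: str            # VM selected from vCenter in the first step
    snapshot_name: str      # name as it will appear on the VMstore
    snapshot_type: str      # one of SNAPSHOT_TYPES, case-insensitive
    retention_minutes: int  # number of minutes to keep the snapshot


def validate_request(req: SnapshotRequest) -> List[str]:
    """Return a list of problems; an empty list means the inputs look usable."""
    problems = []
    if not req.vm_name.strip():
        problems.append("a VM must be selected")
    if not req.snapshot_name.strip():
        problems.append("snapshot name must not be empty")
    if req.snapshot_type.lower() not in SNAPSHOT_TYPES:
        problems.append("snapshot type must be one of: " + ", ".join(SNAPSHOT_TYPES))
    if req.retention_minutes <= 0:
        problems.append("retention minutes must be a positive integer")
    return problems
```

A wrapper workflow could run a check like this on catalog form input and stop early with a readable message instead of failing partway through execution.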

You can find application-specific information on choosing VM-consistent versus crash-consistent snapshots at tintri.com/company/resources. The Tintri best practice guides you’ll find there often give guidance on this setting for specific applications.

Figure 78. Snapshot details, including the type.

You should verify that the Snapshot Workflow executes successfully against a test VM (Figure 78). Then you can allow users to access and use it as-is, or create a workflow that incorporates the Snapshot Workflow.

Per-VM Isolation in a Self-Service Environment

The ability to set QoS at the VM level, natively on the storage, is unique to Tintri. You can guarantee each application its own level of performance, and you can protect other applications by limiting how much performance a given VM is allowed to consume.

These capabilities change the way you approach the tiering of storage. No longer do you need to plan out your storage-based tiers on different pools of storage capabilities. You can use the same pool of storage, but have distinct levels of service based on the settings that make the most sense for your organization. When automation is combined with this feature, it opens up capabilities for both enterprises and service providers.

For enterprises, this feature lets you offer multiple performance levels through a self-service portal. For most workloads, the default setting (no QoS assigned) allows the Tintri array to automatically adjust performance per VM. Workloads that need guarantees or specific limits can have these assigned per VM.

Exposing QoS through a self-service portal is as simple as adding a dropdown. With traditional storage, by contrast, VMs must be placed in the LUN or volume that matches the required storage tier. This requires decision workflows to determine whether there is enough storage on a given tier, or whether the protection policies match that tier. Tintri VMstore systems eliminate the need for these workflows and decisions, because QoS and protection are configured at the VM level.

Service providers can build service tiers where customer VMs automatically get a specific maximum throughput. This gives you the option of charging for guaranteed IOPS. By utilizing logic at the automation tier, customers can automatically be placed into per-VM configurations that limit the maximum number of IOPS. If customers would like to move a VM to a higher tier of storage performance, the VM's actual blocks of storage do not need to be migrated to that tier; the VM's QoS settings can be adjusted instantly to the desired level of performance. See Figure 1 for more.

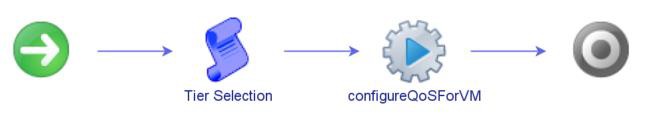

To configure this in vRealize Orchestrator, a simple scriptable task can be utilized to set the variables that will configure these tiers. The scriptable task is put in front of the main action, as shown in Figure 79.

Figure 79. The workflow for configuring QoS.

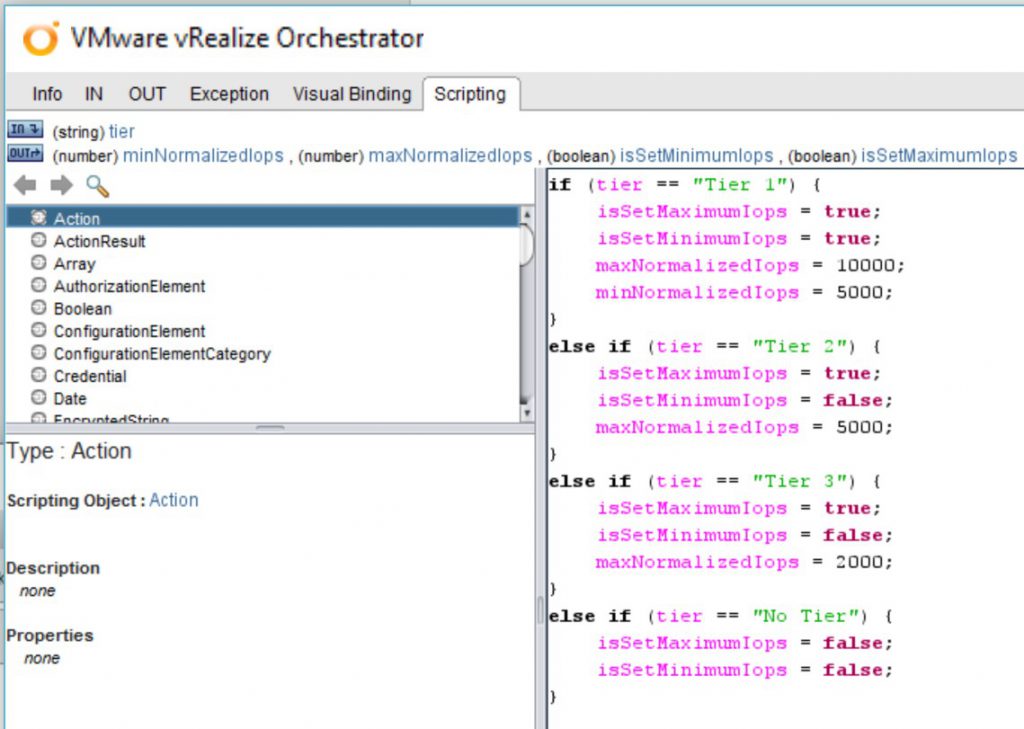

A series of if/else if decisions set the necessary variables, which are then passed into the action (see Figure 80).

Figure 80. Setting variables for QoS, using “if” and “else if” statements in vRealize Orchestrator.

These tier options match the sets of options in the table above. The one difference is that the scriptable task also sets variables indicating whether the minimum IOPS, the maximum IOPS, or both are being set.
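The decision logic described here can be sketched as follows. vRealize Orchestrator scriptable tasks are written in JavaScript, so Python is used only to illustrate the logic. The tier names, the IOPS values, and the variable names (`set_min`, `set_max`, `min_iops`, `max_iops`) are assumptions made for this example; the real values come from the table and from the inputs that the QoS action expects.

```python
from typing import Dict, Optional


def qos_for_tier(tier: str) -> Dict[str, object]:
    """Map a portal tier choice to the variables passed on to the QoS action.

    Tier names and IOPS numbers are hypothetical placeholders.
    """
    set_min = False                  # whether a minimum (guaranteed) IOPS value is applied
    set_max = False                  # whether a maximum (limit) IOPS value is applied
    min_iops: Optional[int] = None
    max_iops: Optional[int] = None

    if tier == "gold":
        # Guarantee only: a floor with no ceiling.
        set_min, min_iops = True, 2000
    elif tier == "silver":
        # Both a guarantee and a limit.
        set_min, min_iops = True, 1000
        set_max, max_iops = True, 5000
    elif tier == "bronze":
        # Limit only: a ceiling with no guaranteed floor.
        set_max, max_iops = True, 1000
    elif tier == "default":
        # No QoS assigned; the array adjusts performance per VM automatically.
        pass
    else:
        raise ValueError("unknown tier: " + repr(tier))

    return {
        "set_min": set_min,
        "min_iops": min_iops,
        "set_max": set_max,
        "max_iops": max_iops,
    }
```

Keeping the min/max flags separate from the IOPS numbers lets a single downstream action handle guarantee-only, limit-only, and combined tiers. Moving a VM between tiers then means calling the action again with a different set of variables.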